TL;DR — Key Takeaways

- Generative AIs don't cite brands randomly: they rely on specific signals (authority, structure, freshness, factual density) that your competitors may be leveraging better than you.

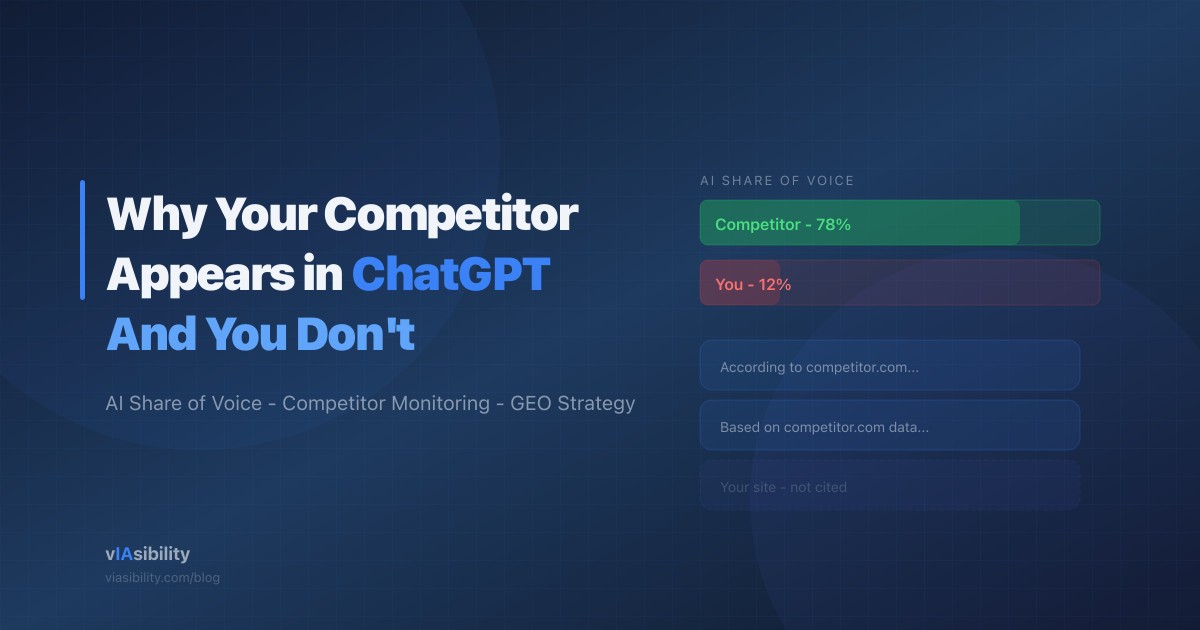

- AI share of voice — your brand's share of mentions relative to competitors in LLM responses — has become a strategic metric in 2026.

- Reverse-engineering your competitors' AI presence reveals actionable patterns: content types, structured data, external mentions.

- Regular competitor monitoring lets you detect shifts before it's too late.

The Problem: Your Competitor Is Everywhere, You're Invisible

You ask ChatGPT a question about your industry. The response cites three sources — your main competitor is one of them. You're not. You try Perplexity. Same result. Gemini? Same. This isn't a bug: it reflects an AI visibility gap you've probably never measured.

This phenomenon affects the majority of businesses today. As Idriss Khouader explains in an analysis for Blog du Modérateur, for a given prompt, only 6% of sources used by ChatGPT and Perplexity overlap — and visibility in AI responses depends on signals very different from Google rankings. Traditional SEO ranking is a necessary but no longer sufficient condition. AIs add an extra selection layer — and that's where your competitors are gaining the edge.

What Is AI Share of Voice?

AI share of voice measures how often your brand, website, or content is cited in generative engine responses relative to your competitors. It's the GEO equivalent of "share of voice" in advertising or "share of search" in SEO.

In practice, if you query ChatGPT, Perplexity, and Gemini on 50 key queries in your industry and your competitor is cited 35 times versus 8 for you, their AI share of voice is more than four times yours (35 / 8 ≈ 4.4). This metric didn't exist two years ago. Today, it's a strategic performance indicator.
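To make the arithmetic concrete, here is a minimal Python sketch that turns a raw list of citations into shares. The function name `share_of_voice` and the brand labels are illustrative assumptions, not part of any tool; the counts are the 35 versus 8 from the example above.

```python
from collections import Counter


def share_of_voice(citations: list[str]) -> dict[str, float]:
    """Return each brand's fraction of all recorded citations."""
    counts = Counter(citations)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {brand: n / total for brand, n in counts.items()}


# Hypothetical tally: one list entry per citation observed in AI responses.
citations = ["competitor"] * 35 + ["you"] * 8
counts = Counter(citations)
sov = share_of_voice(citations)

# Ratio computed from raw counts to avoid floating-point noise.
ratio = counts["competitor"] / counts["you"]
print(f"competitor: {sov['competitor']:.1%}, you: {sov['you']:.1%}, ratio: {ratio}x")
```

With only two brands in the tally, the shares sum to 100%. Adding more competitors to the list changes each share but not the head-to-head ratio.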

The reason it matters: traffic from generative AIs converts at an average of 14.2%, compared to 2.8% for organic Google traffic. Every competitor citation in an AI response is a potential customer lost, at a conversion rate roughly five times higher than what you capture through Google.

How to Reverse-Engineer Your Competitors' AI Presence

The good news: AI presence is not a black box. It relies on identifiable, measurable signals. Here's how to deconstruct what your competitors are doing.

1. Identify the Queries Where They're Cited and You're Not

The first step is to build a list of 20 to 50 strategic queries for your industry: the questions your prospects are asking ChatGPT or Perplexity. Submit them systematically and note who gets cited in each response. Doing this manually is tedious, but tools like vIAsibility automate the monitoring continuously.

According to a Semrush study covered by Blog du Modérateur, 70% of queries submitted to ChatGPT don't fit any traditional search category — meaning most brands aren't yet targeting the right content formats for AI engines. Those who analyze these new search behaviors gain a significant informational advantage.
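Once you have noted who is cited for each query, finding the gaps is a simple filter. The sketch below assumes you have recorded the results by hand as a mapping from query to the set of brands cited. The function `citation_gaps`, the brand names, and the queries are all hypothetical.

```python
def citation_gaps(results: dict[str, set[str]], you: str, competitor: str) -> list[str]:
    """Return the queries whose AI response cites the competitor but not you."""
    return sorted(
        query
        for query, cited in results.items()
        if competitor in cited and you not in cited
    )


# Hypothetical observations: query -> brands cited in the response.
results = {
    "best crm for small agencies": {"CompetitorCo", "OtherBrand"},
    "how to migrate crm data": {"YourBrand", "CompetitorCo"},
    "crm pricing comparison": {"CompetitorCo"},
}

gaps = citation_gaps(results, you="YourBrand", competitor="CompetitorCo")
print(gaps)  # ['best crm for small agencies', 'crm pricing comparison']
```

The resulting list is your priority backlog: each entry is a question where the competitor is visible and you are not.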

2. Analyze Their Content Structure

Once you've identified the queries, visit the competitor pages that get cited. Look for:

- Content architecture: Do they use question-format H2 headings? TL;DRs at the top of articles? BLUF (Bottom Line Up Front) paragraphs?

- Structured data: Inspect their JSON-LD (via Google's testing tool or by viewing the source code). Do they have Article, FAQPage, or Organization schemas that you don't?

- Statistics and citations: Count the number of data points and cited sources per article. The Princeton GEO research showed this is the #1 citability factor.
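The structured-data check in this list can be scripted. Here is a rough sketch, using only Python's standard library, that collects the `@type` values declared in a page's JSON-LD so you can compare two pages. The class and function names are assumptions made for illustration, and the HTML strings stand in for page source you would save yourself.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collects the raw text of <script type="application/ld+json"> tags."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self._buffer = []
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        script_type = (dict(attrs).get("type") or "").lower()
        if tag == "script" and script_type == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self._in_jsonld = False
            self.blocks.append("".join(self._buffer))


def schema_types(html: str) -> set[str]:
    """Return every @type found in the page's JSON-LD, including inside @graph."""
    parser = JsonLdExtractor()
    parser.feed(html)
    types = set()
    for raw in parser.blocks:
        try:
            stack = [json.loads(raw)]
        except json.JSONDecodeError:
            continue  # skip malformed blocks rather than fail the audit
        while stack:
            node = stack.pop()
            if isinstance(node, list):
                stack.extend(node)
            elif isinstance(node, dict):
                declared = node.get("@type")
                if isinstance(declared, str):
                    types.add(declared)
                elif isinstance(declared, list):
                    types.update(t for t in declared if isinstance(t, str))
                if "@graph" in node:
                    stack.append(node["@graph"])
    return types


# Hypothetical page sources for illustration.
competitor_html = (
    '<script type="application/ld+json">'
    '{"@context": "https://schema.org", "@graph": ['
    '{"@type": "Article"}, {"@type": "FAQPage"}, {"@type": "Organization"}]}'
    "</script>"
)
your_html = '<script type="application/ld+json">{"@type": "Organization"}</script>'

missing = schema_types(competitor_html) - schema_types(your_html)
print(sorted(missing))  # ['Article', 'FAQPage']
```

This only looks at top-level and `@graph` entries, which is enough for a first comparison. Nested types (an `author` inside an `Article`, for instance) are deliberately ignored here.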

3. Map Their External Mentions

AIs don't just read websites: they rely on the entire web to assess a source's authority. Check:

- Reddit: Is your competitor mentioned in relevant threads? Reddit is one of the most frequently cited sources by LLMs.

- Industry publications: Do outlets like Search Engine Land, TechCrunch, or major industry blogs mention them?

- Wikipedia and academic sources: Presence in these corpora significantly increases the probability of being cited.

As we explain in our article on how LLMs choose their sources, models exhibit a familiarity bias: they preferentially cite sources frequently encountered in their training data and search indexes.

The 5 Most Common Reasons Your Competitors Get Cited and You Don't

1. Their Content Is Structured for Extraction

A "wall of text" is hard for retrieval systems to extract from. Your competitors likely use descriptive headings, bullet lists, comparison tables, and direct answers at the beginning of each section. SEO experts recommend this format to maximize AI citability because ChatGPT and Perplexity retrieve and synthesize content through RAG (Retrieval-Augmented Generation), and clearly delimited passages are easier to retrieve and reuse.

2. They Have More Complete Structured Data

A well-implemented JSON-LD with Organization, Article, FAQPage, and BreadcrumbList schemas gives retrieval systems immediately exploitable structured context. If your competitor has comprehensive JSON-LD and you don't, they start with a direct technical advantage.

3. Their Content Is Fresher

Retrieval systems explicitly weight freshness. An article updated in 2026 with a recent dateModified will be preferred over similar content with no visible update since 2024. This asymmetry is particularly pronounced on Perplexity, which strongly favors recent content.

4. They Have More External Mentions

Backlinks and mentions on Reddit, LinkedIn, YouTube, and industry publications create an authority signal that search indexes (Bing for ChatGPT, Google for Gemini) pass through to the ranking of retrieved documents. A competitor with 50 relevant external mentions will typically have far better AI coverage than a site with equivalent content but no distribution.

5. They Target the Right Conversational Queries

SEO targets keywords. GEO targets questions. Your competitors may be producing content that directly answers the conversational phrasing used in chatbots: longer, more natural queries, often in complete question form. According to the Semrush study on ChatGPT Search, prompts sent without the Search feature enabled average 23 words, compared to 4.2 words with Search. Conversational prompts are therefore far longer and more detailed than the queries typed into Google.

How to Regain Ground: A 4-Step Action Plan

Step 1: Audit Your Current Position

Before fixing anything, measure. Identify your 30 most strategic queries and submit them to ChatGPT, Perplexity, and Gemini. Systematically note who gets cited. Calculate your AI share of voice against your three main competitors. This is your baseline.
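If you log each response as an (engine, query, cited brands) record, the baseline can be broken down per engine. The following is a sketch under that assumption; the function name `baseline_by_engine`, the record layout, and the brand labels are hypothetical.

```python
from collections import defaultdict


def baseline_by_engine(observations, brands):
    """Compute {engine: {brand: share}} from (engine, query, cited_brands) records.

    Shares are computed among the tracked brands only; other cited
    sources are ignored.
    """
    counts = defaultdict(lambda: dict.fromkeys(brands, 0))
    for engine, _query, cited in observations:
        tally = counts[engine]
        for brand in cited:
            if brand in tally:
                tally[brand] += 1
    baseline = {}
    for engine, tally in counts.items():
        total = sum(tally.values())
        baseline[engine] = {b: (n / total if total else 0.0) for b, n in tally.items()}
    return baseline


# Hypothetical audit log: you plus three competitors (A, B, C).
brands = ["You", "A", "B", "C"]
observations = [
    ("chatgpt", "q1", {"You", "A"}),
    ("chatgpt", "q2", {"A", "SomeOtherSite"}),
    ("perplexity", "q1", {"B"}),
]

baseline = baseline_by_engine(observations, brands)
for engine, shares in baseline.items():
    print(engine, {b: round(s, 2) for b, s in shares.items()})
```

Keeping the split per engine matters because a brand can be well cited on one assistant and absent on another, and a single blended number would hide that.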

Step 2: Close the Technical Gaps

Implement missing structured data. Add dateModified to your articles. Structure your content with question-format H2 headings and direct answers in the first sentence. These technical adjustments are quick to deploy and have measurable impact within weeks.
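As an illustration of the structured data involved, here is a minimal sketch that builds Article markup with a dateModified field and wraps it in a script tag. The property names come from schema.org; the helper `article_jsonld` and all the values passed to it are placeholders to replace with your own.

```python
import json
from datetime import date


def article_jsonld(headline, author, publisher, published, modified):
    """Build a minimal schema.org Article object as a Python dict."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "publisher": {"@type": "Organization", "name": publisher},
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),
    }


# Placeholder values for illustration only.
markup = article_jsonld(
    headline="What Is AI Share of Voice?",
    author="Jane Doe",
    publisher="Example Co",
    published=date(2025, 6, 1),
    modified=date(2026, 1, 15),
)

# Escape "</" so a stray "</script>" inside a string cannot close the tag early.
payload = json.dumps(markup, indent=2).replace("</", "<\\/")
snippet = f'<script type="application/ld+json">\n{payload}\n</script>'
print(snippet)
```

Update dateModified only when the content actually changes, so the date stays a trustworthy freshness signal.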

Step 3: Produce High Factual-Density Content

Create or update your key content by integrating at least one sourced statistic per section. Cite primary sources. Adopt the BLUF format. This is what distinguishes content that an AI will reformulate from content it will ignore.

Step 4: Set Up Continuous Monitoring

AI presence shifts rapidly. A competitor can go from zero to top-cited after a single content update. Monthly, or ideally weekly, monitoring of your AI share of voice lets you detect movements and react before the gap widens.
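Detecting a movement comes down to comparing two snapshots of share of voice. Here is a minimal sketch, assuming each snapshot is a brand-to-share mapping. The function `detect_shifts` and the 5-point threshold are arbitrary illustrative choices to tune for your own data.

```python
def detect_shifts(previous, current, threshold=0.05):
    """Return {brand: change} for brands whose share moved by at least `threshold`.

    A brand missing from a snapshot is treated as having a share of 0.
    """
    shifts = {}
    for brand in set(previous) | set(current):
        delta = current.get(brand, 0.0) - previous.get(brand, 0.0)
        if abs(delta) >= threshold:
            shifts[brand] = delta
    return shifts


# Hypothetical weekly snapshots (shares as fractions of 1).
last_week = {"You": 0.20, "A": 0.50, "B": 0.30}
this_week = {"You": 0.18, "A": 0.40, "B": 0.30, "C": 0.12}

shifts = detect_shifts(last_week, this_week)
for brand, delta in sorted(shifts.items()):
    print(f"{brand}: {delta:+.0%}")
```

In this example, competitor C appears from nowhere and A loses ground, while your own two-point dip stays under the threshold. A lower threshold catches smaller moves at the cost of more noise.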

Conclusion

Visibility in generative AIs is not a game of luck — it's a game of signals. Your competitors who appear in ChatGPT aren't there by chance: they check the right boxes in terms of structure, authority, freshness, and factual density.

The good news: these signals are identifiable, measurable, and improvable. By combining competitive auditing, technical optimization, and regular monitoring, you can not only catch up to your competitors but surpass them — provided you act before the gap becomes structural.

AI share of voice is the metric that will matter over the next 18 months. The question is no longer whether your prospects consult AIs to make decisions — it's who they find in the answers.