TL;DR — Key Takeaways

- LLMs don't rank pages like Google — they predict the most useful text to include in a response, and attribute it to sources they've seen during training or retrieved in real time.

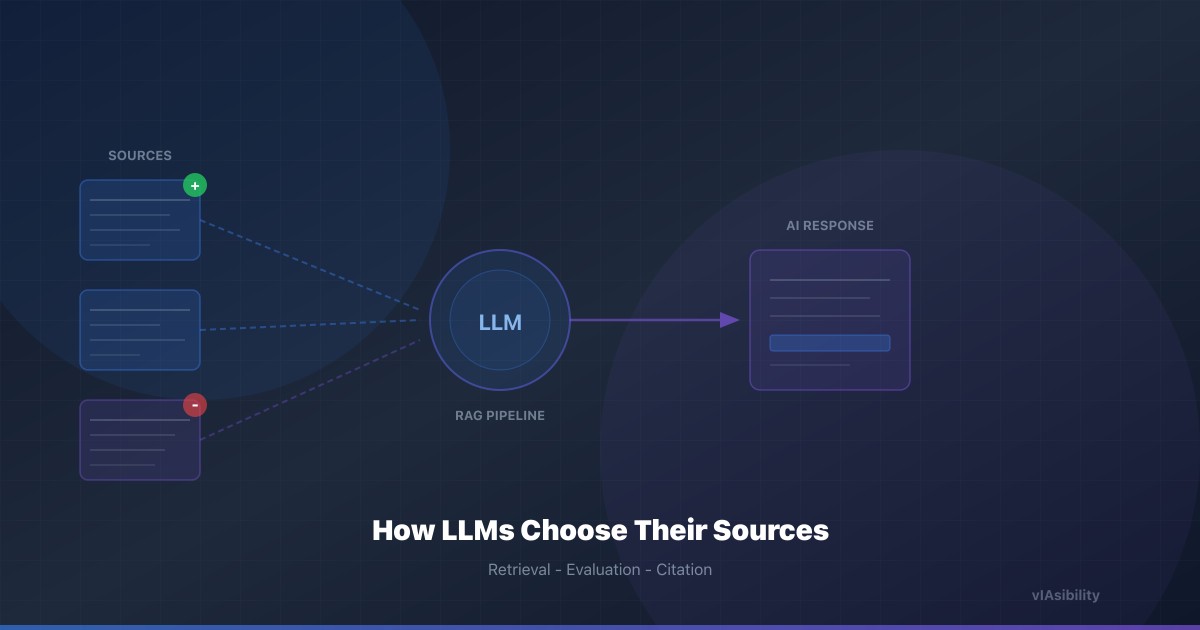

- Retrieval-Augmented Generation (RAG) is now the dominant architecture for grounded AI answers: the model searches an external index, retrieves candidate passages, and synthesizes them.

- Position in the retrieved context, passage clarity, factual density, and source authority all influence whether your content gets cited.

- Academic research from 2024–2025 quantifies these effects — and provides a concrete playbook for content creators.

Why This Matters Right Now

The conversation around GEO often focuses on what to optimize — structured data, factual tone, freshness — without explaining why these techniques work at the model level. That's a problem, because understanding how LLMs select sources lets you make better decisions about content architecture, not just content formatting.

In early 2026, the landscape has shifted dramatically. ChatGPT with search now accounts for roughly 78% of all AI-referred web traffic, and Perplexity generates 15%. Google's AI Overviews appear on over 30% of US desktop queries, up from 13% a year ago. These systems all share a common trait: they retrieve external content and synthesize it into a response. Understanding the retrieval and synthesis pipeline is therefore key to being cited.

The Two Paths to Being Cited by an LLM

There are fundamentally two ways your content can end up in an AI-generated response:

1. Parametric Knowledge (Training Data)

The model has "memorized" your content during pre-training. This happens when your pages were included in the training corpus — typically Common Crawl, Wikipedia, or other large-scale web scrapes. Information used this way is often paraphrased without explicit attribution.

The problem: you have almost no control over this process. Training data is selected months to years before the model is deployed. You can't retroactively insert your 2026 content into a model trained on 2024 data.

2. Retrieved Knowledge (RAG)

This is where you have direct leverage. In Retrieval-Augmented Generation (RAG), the model queries an external search index in real time, retrieves relevant documents, and uses them as context for generating its response. This is how ChatGPT with search, Perplexity, Google AI Overviews, and Microsoft Copilot work.

A 2024 study by Asai et al. ("Self-RAG: Learning to Retrieve, Generate, and Critique through Self-Reflection") showed that a RAG model can be trained to critique retrieved passages rather than copy them blindly: it judges whether each passage is relevant and whether the generated text is actually supported by it before incorporating the information. To the extent production systems behave the same way, the quality and structure of your content directly influence whether it gets used.

How RAG Systems Evaluate Your Content

When a user asks a search-augmented LLM a question, here's what happens behind the scenes:

Step 1: Query formulation. The model converts the user's question into one or more search queries. These are often reformulated — a conversational question like "what's the best way to get cited by AI" might become a structured query like "GEO optimization AI citation techniques 2026."

Step 2: Retrieval. The search backend (Bing for ChatGPT and Copilot, Google for Gemini, a proprietary index for Perplexity) returns a ranked list of candidate documents. This initial ranking is based on traditional information retrieval signals — keyword relevance, domain authority, freshness, and page structure.

Step 3: Passage extraction. The model doesn't ingest entire web pages. It extracts the most relevant passages — typically 200–500 tokens each. This is where content structure matters enormously. As we detail in our article on GEO vs SEO, content with clear headings, question-format H2s, and front-loaded answers gets extracted more reliably.

Step 4: Synthesis and attribution. The model generates a response by weaving together information from multiple retrieved passages. At this stage, it decides which sources to cite, which to paraphrase without attribution, and which to discard entirely.
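The retrieval and extraction steps above can be sketched in a few lines. This is a toy illustration under loud assumptions, not how any production system is implemented: the keyword-overlap scorer stands in for a real search backend, the example URLs and passages are made up, and the names `score`, `retrieve`, and `build_context` are hypothetical.

```python
import re

def tokenize(text: str) -> set[str]:
    """Lowercase word set; a crude stand-in for real query/document analysis."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def score(query: str, passage: str) -> float:
    """Fraction of query terms found in the passage (toy relevance signal)."""
    q = tokenize(query)
    return len(q & tokenize(passage)) / len(q) if q else 0.0

def retrieve(query: str, passages: dict[str, str], k: int = 2) -> list[tuple[str, str]]:
    """Steps 2-3: rank candidate passages, keep the top k that match at all."""
    ranked = sorted(passages.items(), key=lambda item: score(query, item[1]), reverse=True)
    return [(url, text) for url, text in ranked[:k] if score(query, text) > 0]

def build_context(query: str, hits: list[tuple[str, str]]) -> str:
    """Input to step 4: numbered passages the model could cite as [1], [2], ..."""
    lines = [f"[{i}] ({url}) {text}" for i, (url, text) in enumerate(hits, start=1)]
    return "\n".join(lines + [f"Question: {query}"])

# Made-up corpus for illustration only.
passages = {
    "https://example.com/geo": "GEO improves AI citation by structuring content for retrieval.",
    "https://example.com/cats": "Cats sleep for most of the day.",
    "https://example.com/rag": "RAG retrieval feeds passages to the model before it answers.",
}
query = "how does retrieval affect AI citation"
hits = retrieve(query, passages)
print(build_context(query, hits))
```

Note that the higher-scoring passage lands first in the context string, and the off-topic passage never reaches the model at all. Real systems use far richer signals, but the shape of the pipeline is the same.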

What Makes a Source "Citable"? Five Key Factors

Recent research has identified the specific characteristics that make content more likely to be cited in AI-generated responses.

1. Passage Clarity and Self-Containment

A 2024 paper by Cuconasu et al. ("The Power of Noise: Redefining Retrieval for RAG Systems") found that retrieved documents that are topically related to the query but do not actually contain the answer significantly degrade response quality. Counterintuitively, entirely unrelated documents did far less damage. In other words, a passage that looks relevant but never states the answer plainly can hurt more than noise.

The implication: your content must be self-explanatory within each section. A paragraph that assumes context from elsewhere on the page, or that requires visual layout to understand (tables formatted as images, meaning conveyed through color coding), will be overlooked in favor of a clearer competitor.

Actionable: write each H2 section as if it could be read in isolation. Include enough context in the opening sentence that a reader (or model) can understand the point without scrolling back up.
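One way to audit self-containment is to check whether a section's opening sentence leans on earlier context. The sketch below assumes the page is available as Markdown with `## ` headings; the list of "dangling" openers is a hypothetical heuristic, not a rule drawn from the research, and the function names are illustrative.

```python
import re

# Hypothetical openers that usually depend on text from a previous section.
DANGLING_OPENERS = ("this ", "that ", "these ", "those ", "it ",
                    "as mentioned", "as noted", "as above")

def split_sections(markdown: str) -> dict[str, str]:
    """Map each '## ' heading to the body text that follows it."""
    sections: dict[str, str] = {}
    heading = None
    for line in markdown.splitlines():
        if line.startswith("## "):
            heading = line[3:].strip()
            sections[heading] = ""
        elif heading is not None:
            sections[heading] += line + "\n"
    return sections

def first_sentence(body: str) -> str:
    """Text up to the first sentence-ending punctuation mark."""
    text = " ".join(body.split())
    match = re.search(r"[.!?](\s|$)", text)
    return text[: match.end()].strip() if match else text

def flag_dependent_sections(markdown: str) -> list[str]:
    """Headings whose opening sentence starts with a dangling reference."""
    return [
        heading
        for heading, body in split_sections(markdown).items()
        if first_sentence(body).lower().startswith(DANGLING_OPENERS)
    ]

page = """## What is RAG?
Retrieval-Augmented Generation lets a model search an index before answering.

## Why does it matter?
This is why the technique above is so popular.
"""
print(flag_dependent_sections(page))
```

A flagged heading is only a prompt for a human editor: some sentences that start with "This" are perfectly self-contained, so treat the output as a review list rather than a verdict.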

2. Factual Density and Verifiable Claims

The Princeton study on GEO (Aggarwal et al., 2023) found that Statistics Addition and Quotation Addition were among the most effective techniques for improving visibility in generative engine responses, with the best-performing methods boosting visibility by up to 40%.

But why do these work at the model level? Because LLMs with retrieval capabilities are increasingly trained with reward models that penalize hallucination. A passage that provides a precise, verifiable claim — "conversion rates from AI-referred traffic average 14.2% vs. 2.8% for organic search" — gives the model a low-risk fact it can include directly. Vague claims like "AI traffic converts much better" offer no such safety net.

Actionable: aim for at least one quantitative, sourced claim per H2 section. Link to the primary source (academic paper, industry report, official documentation) so the retrieval system can verify the claim's origin.
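The "one quantitative, sourced claim per section" rule is easy to check mechanically. The following is a minimal sketch, assuming plain-text section bodies; the regular expressions are rough heuristics, `audit_section` is a hypothetical helper, and the sample figures are placeholders rather than real data.

```python
import re

NUMBER = re.compile(r"\d+(?:[.,]\d+)*\s*%?")   # integers, decimals, percentages
LINK = re.compile(r"https?://\S+")             # any absolute URL

def audit_section(text: str) -> dict:
    """Count sentences and quantified sentences; note whether a link is present."""
    # Naive splitter: breaks on ., ! or ? followed by whitespace, so
    # abbreviations such as "vs." would be over-split.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    quantified = [s for s in sentences if NUMBER.search(s)]
    return {
        "sentences": len(sentences),
        "quantified": len(quantified),
        "has_source_link": bool(LINK.search(text)),
    }

# Placeholder copy for illustration only.
section = (
    "AI-referred visitors converted at 14.2% in our sample. "
    "Organic visitors converted at 2.8%. "
    "The gap is large. "
    "Source: https://example.com/report"
)
result = audit_section(section)
print(result)
```

A section with `quantified == 0` or `has_source_link == False` is a candidate for a statistic or a primary-source link. The check says nothing about whether the numbers are correct, which still needs a human.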

3. Position Effects: The Primacy Bias

A notable finding from Liu et al. (2024), "Lost in the Middle: How Language Models Use Long Contexts," is that LLMs use long contexts unevenly. Performance follows a U-shaped curve: models use information best when it sits at the beginning of the context window (primacy bias) or at the end (recency bias), and markedly worse when it sits in the middle, where it tends to be "lost."

This has two implications for content creators:

- Within your page: front-load your key information. The TL;DR block, the first paragraph, and the first sentence after each heading are your highest-value real estate.

- Within search results: ranking higher in the retrieval results means your content appears earlier in the model's context window. This links back to SEO fundamentals — domain authority and traditional ranking signals still matter because they determine your position in the retrieved context.
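Front-loading within a page can be checked with a simple position test. The sketch below is an assumption-laden heuristic: it measures where a key phrase first appears, counted in whitespace-separated words, and compares that to a word budget. The 200-word default and both function names are illustrative choices, not values taken from Liu et al.

```python
from typing import Optional

def first_mention_word_index(text: str, phrase: str) -> Optional[int]:
    """Number of words that precede the first occurrence of `phrase`, or None."""
    lowered = text.lower()
    char_index = lowered.find(phrase.lower())
    if char_index == -1:
        return None
    return len(lowered[:char_index].split())

def is_front_loaded(text: str, phrase: str, word_budget: int = 200) -> bool:
    """True if `phrase` first appears within the first `word_budget` words."""
    index = first_mention_word_index(text, phrase)
    return index is not None and index < word_budget

# Synthetic article: one early claim, 300 words of filler, one late claim.
article = (
    "RAG systems cite clear passages. "
    + "Filler sentence here. " * 100
    + "Our key finding is a 40% lift."
)
print(is_front_loaded(article, "clear passages"))  # early claim
print(is_front_loaded(article, "key finding"))     # buried claim
```

Here the first phrase passes and the second does not, because it only appears after roughly 300 words of filler. The same test can be run per section by passing the section body instead of the whole article.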

4. Source Authority and Domain Reputation

LLMs don't maintain a "trust score" for each domain the way a search engine's ranking system (Google's PageRank, for example) does. But the retrieval systems they rely on do. When ChatGPT uses Bing for search, Bing's authority signals (backlinks, domain age, brand mentions) determine which pages get retrieved in the first place.

Additionally, research from Vu et al. (2023) showed that LLMs tend to preferentially cite sources that appear frequently in their training data. Sites like Wikipedia, Stack Overflow, and major news outlets benefit from a compounding advantage: they were well-represented in training data, so the model "knows" them, and they tend to rank well in retrieval, so they appear prominently in the context.

For smaller or newer sites, this means the path to citation credibility often runs through external mentions. Being discussed on Reddit, cited in industry publications, or referenced in Wikipedia's own sources — these are all signals that help a model "recognize" your domain as authoritative. Building topical authority through content clusters is the on-site equivalent of this strategy.

5. Content Freshness and Update Signals

Retrieval systems weight freshness explicitly. Bing, Google, and Perplexity all factor in publication dates and last-modified headers when ranking candidate documents. A 2025 Botify analysis confirmed that content updated within the last 90 days receives significantly more AI citations than older content on the same topic.

This is why we recommend displaying a clear dateModified in your HTML metadata and structured data, and using IndexNow to notify search engines of updates immediately. The faster your updated content enters the retrieval index, the sooner it can be cited.
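As a rough sketch of both recommendations, the snippet below builds an Article JSON-LD object carrying `datePublished` and `dateModified` (both schema.org properties) and prepares the JSON body that the IndexNow protocol expects. The host, key, URLs, and helper names are placeholders and assumptions; the code deliberately stops short of sending any request.

```python
import json
from datetime import datetime, timezone

def article_json_ld(headline: str, url: str,
                    published: datetime, modified: datetime) -> str:
    """Serialize a minimal schema.org Article with explicit freshness dates."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "url": url,
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),
    }
    return json.dumps(data, indent=2)

def indexnow_payload(host: str, key: str, urls: list[str]) -> dict:
    """Body for a POST to an IndexNow endpoint (e.g. https://api.indexnow.org/indexnow).

    Assumes the key file is hosted at the site root as <key>.txt.
    """
    return {
        "host": host,
        "key": key,
        "keyLocation": f"https://{host}/{key}.txt",
        "urlList": list(urls),
    }

# Placeholder values for illustration only.
page_url = "https://www.example.com/how-llms-cite"
markup = article_json_ld(
    "How LLMs Choose Sources",
    page_url,
    published=datetime(2025, 6, 1, tzinfo=timezone.utc),
    modified=datetime(2026, 1, 15, tzinfo=timezone.utc),
)
payload = indexnow_payload("www.example.com", "0123456789abcdef", [page_url])
print(markup)
print(json.dumps(payload, indent=2))
```

The JSON-LD string would go inside a `<script type="application/ld+json">` tag in the page head, and the payload would be sent as `application/json` whenever the page changes. Keep the visible "last updated" date in sync with `dateModified` so the signals do not contradict each other.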

The Attribution Pipeline: How Models Decide What Gets a Link

Not all information used by an LLM gets attributed. Understanding the attribution pipeline helps you optimize for explicit citation, not just passive use.

Perplexity is the most citation-friendly platform. Its architecture requires explicit source attribution for every factual claim, generating numbered citations inline. According to Perplexity's own documentation, the system prioritizes sources that provide direct, concise answers to the specific query — which aligns with the "direct answer" content format.

ChatGPT with search provides inline citations with links, but is more selective. Observations from monitoring data suggest it preferentially cites:

- Sources that directly answer the question (not tangential mentions)

- Sources with strong domain authority in Bing's index

- Sources that provide unique data or analysis not available elsewhere

Google AI Overviews cite sources from Google's own organic results. As documented by Ahrefs, 92.36% of cited URLs come from the top 10 organic results for the query. This means traditional SEO excellence is a prerequisite for AI Overview citation.

Gemini uses Google Search grounding when enabled, following a pattern similar to AI Overviews. For ungrounded responses, it relies on parametric knowledge — making training data presence the dominant factor.

A Research-Backed GEO Checklist

Based on the research cited above, here's a prioritized checklist for maximizing your chances of being cited by generative AIs:

- Structure for extraction: use question-format H2 headings, BLUF paragraphs, and self-contained sections. Each section should be independently understandable.

- Maximize factual density: include at least one verifiable statistic or sourced citation per section. Link to primary sources (academic papers, industry reports, official docs).

- Implement comprehensive JSON-LD: Article, Organization, FAQPage, and BreadcrumbList schemas give retrieval systems structured context about your content.

- Front-load key information: place your most important claims in the first 200 words of the article, the first sentence after each heading, and in a TL;DR block at the top.

- Maintain freshness signals: update key articles quarterly, display dateModified in HTML and structured data, and use IndexNow for instant re-indexing.

- Build domain authority for retrieval: earn backlinks, cultivate mentions on Reddit and industry platforms, and build internal content clusters that demonstrate topical authority.

- Test across multiple platforms: monitor your citation presence on ChatGPT, Perplexity, Gemini, and Google AI Overviews separately. Each platform has different retrieval biases and citation patterns.
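For the monitoring item, a per-platform citation share is a reasonable starting metric. The sketch below assumes you have already logged the URLs each platform cited for a fixed set of test prompts (collected by hand or by whatever tooling you use); the sample data, the domain, and the `citation_share` helper are hypothetical.

```python
from urllib.parse import urlparse

def citation_share(cited_urls_by_platform: dict[str, list[str]],
                   domain: str) -> dict[str, float]:
    """Fraction of cited URLs per platform that belong to `domain` or a subdomain."""
    domain = domain.lower()
    shares = {}
    for platform, urls in cited_urls_by_platform.items():
        hosts = [urlparse(url).netloc.lower() for url in urls]
        ours = [h for h in hosts if h == domain or h.endswith("." + domain)]
        # Platforms with no logged citations get 0.0 rather than a division error.
        shares[platform] = len(ours) / len(hosts) if hosts else 0.0
    return shares

# Made-up observation log for illustration only.
observed = {
    "perplexity": [
        "https://www.example.com/geo",
        "https://en.wikipedia.org/wiki/RAG",
        "https://blog.example.com/rag",
        "https://other.org/x",
    ],
    "chatgpt": ["https://other.org/a", "https://en.wikipedia.org/wiki/SEO"],
    "gemini": [],
}
shares = citation_share(observed, "example.com")
print(shares)
```

Tracking this number per platform over time, against the same prompt set, makes it easier to see whether a content change moved citations on one system but not the others.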

What's Coming Next: Agentic Search and Multi-Step Retrieval

The next evolution is already underway. Models like OpenAI's GPT-5 and Google's Gemini 2.5 are moving toward agentic search — where the model performs multiple rounds of retrieval, refining its queries based on intermediate results. OpenAI's Deep Research exemplifies this pattern: a single user question triggers dozens of searches, cross-referencing results across sources before generating a synthesis.

For content creators, this means:

- Depth matters more than breadth: agentic search will find specialized, detailed content that wouldn't surface in a single-query retrieval. Deep, authoritative content on narrow topics becomes more valuable.

- Consistency across pages matters: if your site provides contradictory information across different pages, agentic search will detect the inconsistency and may penalize your credibility.

- Structured data becomes navigation: when a model is "browsing" your site across multiple retrieval steps, well-organized JSON-LD, internal links, and clear site architecture help it understand the relationships between your pages.

Conclusion

Being cited by an LLM isn't random, and it isn't solely a function of your Google ranking. It's the result of a pipeline — retrieval, extraction, evaluation, synthesis, attribution — where each step rewards specific content characteristics.

The research is clear: passage clarity and self-containment, factual density, front-loaded positioning, source authority, and content freshness are the five factors that determine whether your content gets cited. These aren't abstract principles: they map directly to measurable content attributes that you can audit and improve systematically.

The brands that understand this pipeline today are building the foundation for AI visibility in an era where SEO and GEO are becoming inseparable. Your content isn't just competing for Google's first page anymore — it's competing for a spot in the model's response.